nClouds AWS Case Study | Teamworks

Teamworks is a VC-backed startup founded in 2004 that provides an engagement platform built by athletes for athletes. Its collaboration software, used worldwide, is designed to help elite athletic teams operate effectively and efficiently, from scheduling and communication to sharing files and managing travel. It helps more than 3,000 elite athletic teams connect and collaborate so they can focus on winning. Learn more about Teamworks at www.teamworks.com.

Improved performance efficiency

Increased scalability

THE CHALLENGE

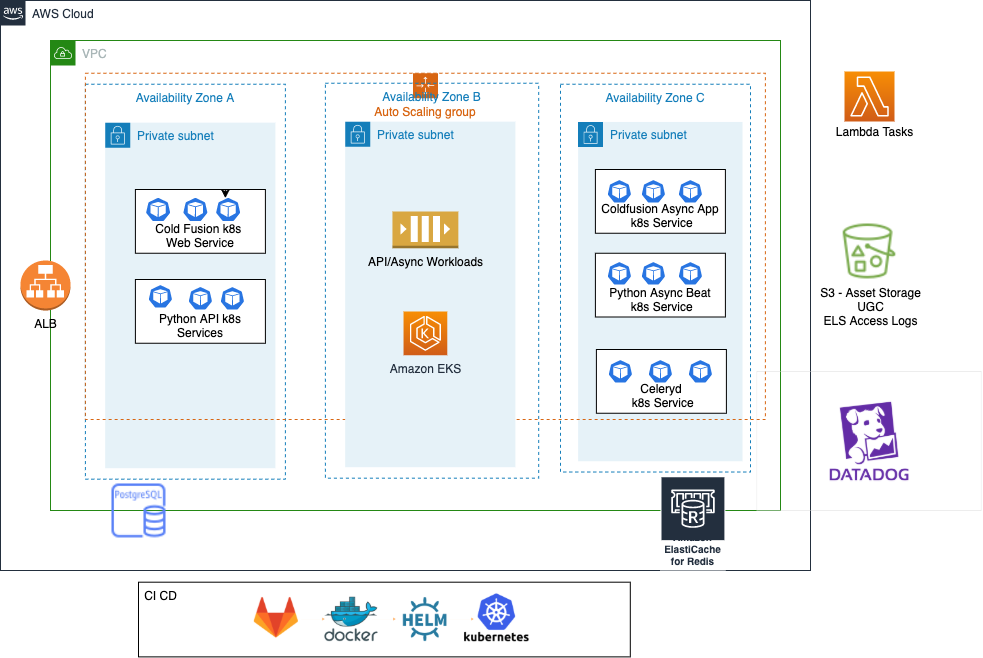

Teamworks was implementing continuous integration (CI) in its architecture and wanted tighter integration with AWS services to improve performance efficiency and scalability.

“To provide a superb collaboration platform to our customers, it’s critical for the Teamworks app to excel in performance efficiency and scalability. With nClouds’ expertise in migration and DevOps, we were able to optimize our app to deliver high availability, low latency, consistent performance, and scalable capacity.”

THE SOLUTION

Teamworks required a modernized software architecture to improve performance efficiency and scalability.

nClouds partnered with Teamworks to build out an Amazon Elastic Kubernetes Service (Amazon EKS) cluster in the staging environment using Terraform, and then to perform the infrastructure buildout and migration in the production environment.

Teamworks’ existing application stack was migrated from Amazon Elastic Compute Cloud (Amazon EC2) to Amazon EKS, following best practices for migrating, configuring, and deploying applications to Kubernetes.
