nClouds AWS Case Study

Transforming Luxury Transportation with Conversational AI

How nClouds helped a premium mobility provider reinvent ride management with Amazon Lex

Challenge

The transportation provider operated a customer support model centered around email communication and live agents to manage ride scheduling, changes, and cancellations. While effective for handling complex or VIP-specific requests, the model became increasingly strained during high-volume periods. Key pain points included:

- Long wait times during peak travel hours and holidays.

- Operational inefficiency caused by repetitive, low-complexity inquiries.

- Limited scalability, with high staffing costs and fixed response windows.

- A lack of intuitive, mobile-first digital engagement channels.

To meet the expectations of a discerning clientele, the company required a Conversational AI (CAI) solution that could:

- Understand and process natural-language ride management requests.

- Provide real-time updates on itineraries, drivers, and vehicle status.

- Seamlessly integrate with backend systems for secure data access.

- Offer round-the-clock service, high availability, and effortless scalability.

Strategy and Solution

Working closely with AWS and the customer’s product team, nClouds architected and deployed a serverless, end-to-end Conversational AI platform. The solution leveraged native AWS services to ensure operational agility, performance, and cost-effectiveness, without compromising on security or reliability.

Key Functional Objectives

- Replace traditional phone/email support for routine requests with an intelligent chatbot.

- Ensure that all business logic, identity access, and data retrieval occurred securely and contextually in real time.

- Enable fast iteration and observability to refine the assistant based on customer behavior and operational feedback.

Core Components

- Amazon Lex (v2): Serves as the NLU (Natural Language Understanding) engine. Lex handles voice and text-based inputs through predefined intents such as BookRide, CancelTrip, ModifyReservation, and UpdatePreferences. The bot is embedded within the customer’s web and mobile applications.

- AWS Lambda: Handles all backend logic. For each intent, a corresponding Lambda function is triggered to validate input, query or update data, and compose personalized responses.

- Amazon RDS (PostgreSQL, Multi-AZ): Maintains critical structured data including trip reservations, customer profiles, and driver schedules. Multi-AZ deployment ensures fault tolerance and failover support.

- Amazon CloudWatch & AWS X-Ray: Provide unified logging, performance monitoring, and distributed tracing to observe and optimize CAI flows in production.
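The Lex-to-Lambda handoff described above can be sketched as a small intent router. This is a minimal illustration, not the customer's code: it assumes a single Lambda entry point that dispatches on the intent name found in the Lex V2 event (`sessionState.intent.name`). The handler functions and their reply text are hypothetical placeholders; only the intent names come from the case study.

```python
from typing import Any, Callable, Dict

LexEvent = Dict[str, Any]
LexResponse = Dict[str, Any]


def close(intent_name: str, message: str, state: str = "Fulfilled") -> LexResponse:
    """Build a Lex V2 'Close' response that ends the dialog with one message."""
    return {
        "sessionState": {
            "dialogAction": {"type": "Close"},
            "intent": {"name": intent_name, "state": state},
        },
        "messages": [{"contentType": "PlainText", "content": message}],
    }


# Hypothetical per-intent handlers. A real handler would validate input and
# query or update the database before composing its reply.
def handle_book_ride(event: LexEvent) -> LexResponse:
    return close("BookRide", "Your ride request has been received.")


def handle_cancel_trip(event: LexEvent) -> LexResponse:
    return close("CancelTrip", "Your trip has been cancelled.")


def handle_modify_reservation(event: LexEvent) -> LexResponse:
    return close("ModifyReservation", "Your reservation has been updated.")


def handle_update_preferences(event: LexEvent) -> LexResponse:
    return close("UpdatePreferences", "Your preferences have been saved.")


HANDLERS: Dict[str, Callable[[LexEvent], LexResponse]] = {
    "BookRide": handle_book_ride,
    "CancelTrip": handle_cancel_trip,
    "ModifyReservation": handle_modify_reservation,
    "UpdatePreferences": handle_update_preferences,
}


def lambda_handler(event: LexEvent, context: Any) -> LexResponse:
    """Entry point: route the Lex V2 event to the handler for its intent."""
    intent_name = event["sessionState"]["intent"]["name"]
    handler = HANDLERS.get(intent_name)
    if handler is None:
        # Unknown intent: end the dialog and mark the intent as failed.
        return close(intent_name, "Sorry, I can't help with that yet.", state="Failed")
    return handler(event)
```

Keeping the routing table separate from the handlers means a new intent can be added by registering one more function, which fits the fast-iteration objective listed earlier.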

Example Customer Interaction:

Customer: “I need a car to Newark Airport tomorrow at 8 AM.”

- Amazon Lex identifies the intent BookRide and extracts the destination, date, and time.

- Lambda retrieves user preferences, checks for driver and vehicle availability in Amazon RDS, and confirms booking.

- Lex responds: “Your ride to Newark Airport is confirmed for 8:00 AM tomorrow. Your driver is Alex, and the vehicle will be a black SUV.”

This entire flow is completed in under two seconds, without agent intervention.
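The BookRide flow above could look roughly like the following fulfillment function. This is a sketch under stated assumptions: the slot names (`Destination`, `PickupDate`, `PickupTime`), the sample date, and the in-memory `AVAILABLE_DRIVERS` list are hypothetical, and the list stands in for the availability query that the real system runs against Amazon RDS.

```python
from typing import Any, Dict, List, Optional

# Hypothetical stand-in for the driver and vehicle data held in Amazon RDS.
AVAILABLE_DRIVERS: List[Dict[str, str]] = [
    {"name": "Alex", "vehicle": "black SUV", "date": "2025-01-15", "time": "08:00"},
]


def slot_value(event: Dict[str, Any], name: str) -> Optional[str]:
    """Return the interpreted value of a Lex V2 slot, or None if it is unfilled."""
    slot = event["sessionState"]["intent"]["slots"].get(name)
    if slot and slot.get("value"):
        return slot["value"].get("interpretedValue")
    return None


def find_driver(date: Optional[str], time: Optional[str]) -> Optional[Dict[str, str]]:
    """Look up a driver free at the requested date and time (in-memory stand-in)."""
    for driver in AVAILABLE_DRIVERS:
        if driver["date"] == date and driver["time"] == time:
            return driver
    return None


def fulfill_book_ride(event: Dict[str, Any]) -> Dict[str, Any]:
    """Check availability and return a Lex V2 Close response with the outcome."""
    destination = slot_value(event, "Destination")
    date = slot_value(event, "PickupDate")
    time = slot_value(event, "PickupTime")

    driver = find_driver(date, time)
    if driver is None:
        state = "Failed"
        message = f"Sorry, no vehicle is available to {destination} at {time} on {date}."
    else:
        state = "Fulfilled"
        message = (
            f"Your ride to {destination} is confirmed for {time} on {date}. "
            f"Your driver is {driver['name']}, and the vehicle will be a {driver['vehicle']}."
        )

    return {
        "sessionState": {
            "dialogAction": {"type": "Close"},
            "intent": {"name": "BookRide", "state": state},
        },
        "messages": [{"contentType": "PlainText", "content": message}],
    }
```

Returning a `Failed` state with an explanatory message, rather than raising, lets the bot tell the customer why the booking did not go through.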

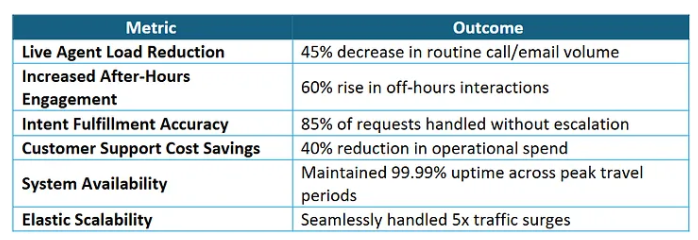

Results + Benefits

The nClouds CAI solution delivered measurable results within the first 90 days of production:

Cost Efficiency: TCO and Operational Benefits

The serverless compute layer was pivotal in controlling cost. By building on Amazon Lex and AWS Lambda, with Amazon RDS Multi-AZ as the managed database, the company avoided costly overprovisioning and most infrastructure maintenance.

TCO Highlights:

- Pay-as-you-go pricing aligned directly with usage spikes and seasonal demand.

- Minimal DevOps burden — no EC2 management, patching, or autoscaling required.

- Faster time-to-value, with the first version of the assistant deployed in under six weeks.

Lessons Learned

This engagement yielded key takeaways relevant for enterprises embarking on CAI transformation:

- Backend Integration is Critical: Accurate, context-rich responses depend on deep connectivity with operational systems.

- Slot Elicitation Enhances User Experience: Lex’s structured dialog features guided users through flows with greater success.

- Monitoring Drives Optimization: CloudWatch and X-Ray were essential for tuning intent flows and identifying failure patterns.

- Trust Comes from Precision: Real-time confirmations built on verified data increased user confidence in the system.
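The slot elicitation point can be illustrated with a Lex V2 dialog code hook that asks for the first missing slot. This is a sketch only: Lex can also elicit slots from prompts configured on the bot without any Lambda code, and the case study does not say which approach was used. The slot names and prompt wording are hypothetical.

```python
from typing import Any, Dict, Optional

# Hypothetical required slots for a BookRide intent, in the order to ask for them.
REQUIRED_SLOTS = ("Destination", "PickupDate", "PickupTime")

# Hypothetical prompt text for each slot.
PROMPTS = {
    "Destination": "Where would you like to go?",
    "PickupDate": "What date do you need the car?",
    "PickupTime": "What time should the driver arrive?",
}


def next_missing_slot(slots: Dict[str, Any]) -> Optional[str]:
    """Return the first required slot that has no value yet, or None if all are filled."""
    for name in REQUIRED_SLOTS:
        slot = slots.get(name)
        if not slot or not slot.get("value"):
            return name
    return None


def dialog_hook(event: Dict[str, Any]) -> Dict[str, Any]:
    """Ask for the next missing slot, or hand control back to Lex when none remain."""
    intent = event["sessionState"]["intent"]
    missing = next_missing_slot(intent.get("slots") or {})
    if missing is not None:
        return {
            "sessionState": {
                "dialogAction": {"type": "ElicitSlot", "slotToElicit": missing},
                "intent": intent,
            },
            "messages": [{"contentType": "PlainText", "content": PROMPTS[missing]}],
        }
    # All slots are filled: let Lex decide the next step in the dialog.
    return {"sessionState": {"dialogAction": {"type": "Delegate"}, "intent": intent}}
```

Asking for one slot at a time keeps each prompt short, which suits the mobile-first channel the customer wanted.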

Conclusion

Through close collaboration with AWS and the customer’s internal teams, nClouds delivered a high-impact Conversational AI platform that redefined how clients manage luxury transportation — achieving scalability, 24/7 availability, and a more refined user experience without compromising service excellence.

This project demonstrates the power of Amazon Lex and serverless design in solving real-world, customer-facing problems while delivering business results at scale.